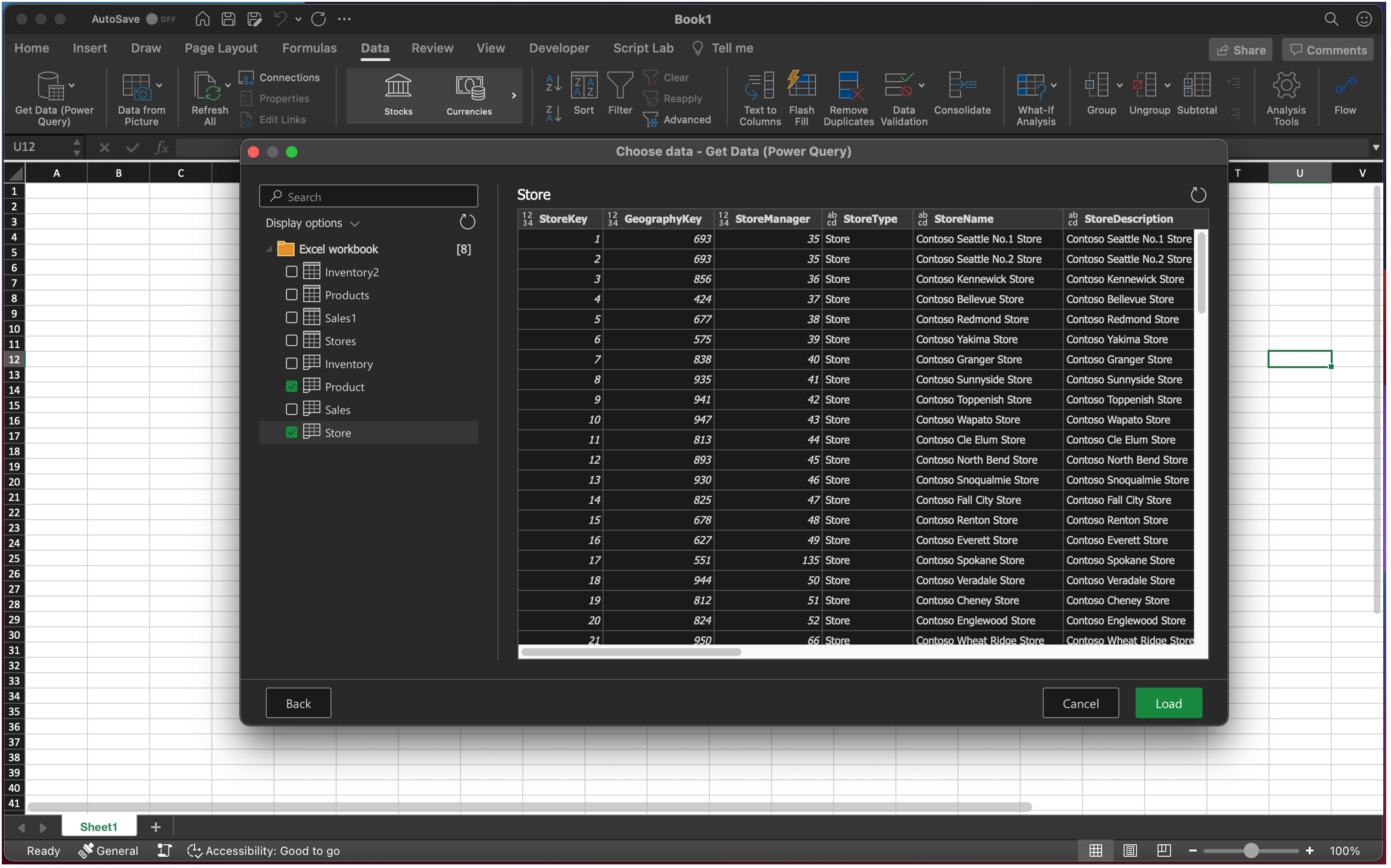

One of the great things about being on the Excel team is the opportunity to meet with a broad set of customers. In talking with Excel users, it’s obvious that significant confusion exists about what exactly “big data” is. Many customers are left on their own to make sense of a cacophony of acronyms, technologies, architectures, business models and vertical scenarios. It is therefore unsurprising that some folks have come up with wildly different ways to define what “big data” means. We’ve heard from some folks who thought big data was working with two thousand rows of data. And we’ve heard from vendors who claim to have been doing big data for decades and don’t see it as something new.

The wide range of interpretations sometimes reminds us of the old parable of the blind men and an elephant, where a group of men touch an elephant to learn what it is. Each man feels a different part, but only one part, such as the tail or the tusk. They then compare notes and learn that they are in complete disagreement.

On the Excel team, we’ve taken pointers from analysts to define big data as data that includes any of the following:

- High volume: both in terms of data items and dimensionality.
- High velocity: arriving at a very high rate, usually with an assumption of low latency between data arrival and deriving value.
- High variety: embraces the ability for data shape and meaning to evolve over time.
- Cost-effective processing: as we mentioned, many vendors claim they’ve been doing big data for decades. Technically this is accurate; however, many of these solutions rely on expensive scale-up machines with custom hardware and SAN storage underneath to get enough horsepower. The most promising aspect of big data is the innovation that lets you trade off some aspects of a solution to dramatically lower the cost of building and deploying it.
- Innovative types of analysis: doing the same old analysis on more data is generally a good sign you’re doing scale-up and not big data.
- Novel business value: between this principle and the previous one, if a data set doesn’t really change how you do analysis or what you do with your analytic result, then it’s likely not big data.

At the same time, savvy technologists also realize that sometimes their needs are best met with tried and trusted technologies. When they need to build a mission-critical system that requires ACID transactions, a robust query language and enterprise-grade security, relational databases usually fit the bill quite well, especially as relational vendors advance their offerings to bring some of the benefits of new technologies to their existing customers. This calls for a more mature understanding of the needs and technologies to create the best fit.

There are a variety of different technology demands for dealing with big data: storage and infrastructure, capture and processing of data, ad hoc and exploratory analysis, pre-built vertical solutions, and operational analytics baked into custom applications. The sweet spot for Excel among these big data scenario categories is exploratory and ad hoc analysis. Here, business analysts want to use their favorite analysis tool against new data stores to get unprecedented richness of insight. They expect the tools to go beyond embracing the “volume, velocity and variety” aspects of big data by also allowing them to ask new types of questions they weren’t able to ask earlier, including more predictive and prescriptive experiences and the ability to include more unstructured data (like social feeds) as first-class input into their analytic workflow.

Broadly speaking, there are three patterns of using Excel with external data, each with its own set of dependencies and use cases. These can be combined in a single workbook to meet appropriate needs. When working with big data, there are a number of technologies and techniques that can be applied to make these three patterns successful.

Many customers use a connection to bring external data into Excel as a refreshable snapshot.